Pharmaceutical manufacturers today rely on process-related statistics for sound decision making related to Quality by Design (QbD), process improvement initiatives and investigations. They pull data from disparate sources across manufacturing networks to populate graphs, charts and predictive models designed to alert teams to potential problems and prevent unwanted batch outcomes.

Valuable insights help determine whether a particular process change or preventative action is worth the required time and cost. Useful as they are, statistics overwhelm most non-statisticians in manufacturing, especially when teams are thrown into the process analysis fray with limited background (this may resonate with any of you who say, or know people who say, that they “took a stats class in college”).

Large life sciences companies often have upward of 100 statisticians employed on the clinical side, but only a handful of trained statisticians in manufacturing. With so few experts, more manufacturing and quality team members need to become better “data scientists,” armed with a high-level, conceptual understanding that helps gather and analyze the right elements to make better-informed business decisions.

Without returning to a university classroom, you can improve your understanding of statistics to avoid common pitfalls, ask the right questions and draw sound conclusions when statistical results are presented, which helps provide an appropriate check-and-balance for your organization. We provide the following recommendations using simplified examples with an important disclaimer: In practice, some situations can be much more complex and require consulting with a statistician. However, these examples may help you approach experts with the right mindset.

Understand Statistical Errors

Statistical inferences are based on probabilities. What is the chance of a right-handed baseball player hitting a pitch from a lefty? What is the likelihood that you have a car accident on your way home or win the lottery? Statistics allow you to work with probabilities and draw educated conclusions for informed choices. The science of statistics relies on analysis, which encompasses data gathering, organizing, filtering, visualizing and summarizing.

The difference between “inferential” and “descriptive” statistics is a useful starting place. The latter (also known as “summary statistics”) is used for process monitoring (statistical process control) in manufacturing.

Inferential statistics is the science of drawing statistical conclusions from specific data using a knowledge of probability. Typically, inferential stats help answer investigational questions and cover statistical analyses such as t-tests, analysis of variance (ANOVA), multiple regression and correlation. Insurance companies, for example, use inferential statistics to charge young males with red sports cars a premium over older soccer moms who drive minivans.

In manufacturing, we use a combination of inferential and descriptive statistics for process monitoring and investigations. Have you ever heard someone say they can make statistics look any way they want to support conclusions? This is somewhat true, because there is a degree of error in all statistics. The important goal when using statistics for science-based knowledge is minimizing errors that occur when statistical results differ from what is truly happening on the manufacturing floor.

There are two types of statistical errors to understand. A Type I Error, or false positive, occurs when a test incorrectly concludes that there is a difference (for example, a difference in yield between sites) even though there is no true difference in the manufacturing process.

Conversely, a Type II Error is a false negative, incorrectly concluding there is no difference in yield between sites while a true difference really does exist in the process. Basically, because inferential statistics rely on probabilities to reach conclusions, there is a chance that the results of a statistical test are incorrect. The following describes how you can ask critical questions to help reduce the chance of committing statistical errors, and/or the misinterpretation of statistical results, to improve manufacturing analytics.
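The two error types can be illustrated with a small simulation. The sketch below is not from the original analysis: the yield values (mean 90, standard deviation 5), the 50 batches per site and the function names are all assumptions chosen for illustration, and the p-value uses a large-sample normal approximation rather than an exact t-test.

```python
import random
from statistics import NormalDist, mean, stdev

def p_value_two_sites(site_a, site_b):
    """Two-sided p-value for a difference in mean yield (large-sample z approximation)."""
    se = (stdev(site_a) ** 2 / len(site_a) + stdev(site_b) ** 2 / len(site_b)) ** 0.5
    z = (mean(site_a) - mean(site_b)) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def significant_rate(true_difference, n_batches=50, trials=2000, alpha=0.05, seed=1):
    """Share of simulated site comparisons that come out statistically significant."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Hypothetical yields: site B is shifted by true_difference relative to site A.
        site_a = [rng.gauss(90.0, 5.0) for _ in range(n_batches)]
        site_b = [rng.gauss(90.0 + true_difference, 5.0) for _ in range(n_batches)]
        if p_value_two_sites(site_a, site_b) < alpha:
            hits += 1
    return hits / trials

# No true difference: every "significant" result is a false positive (Type I Error).
false_positive_rate = significant_rate(true_difference=0.0)
# A true difference of 1 yield point: every non-significant result is a miss (Type II Error).
false_negative_rate = 1 - significant_rate(true_difference=1.0)

print(f"Type I error rate:  {false_positive_rate:.3f}")
print(f"Type II error rate: {false_negative_rate:.3f}")
```

Under these assumptions the false positive rate should land close to the chosen alpha, while the false negative rate is much higher because a one-point difference is small relative to the batch-to-batch variation.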

Examine Statistical Differences

While you may never strive to be a full-time statistician, as a statistics user presented with a “statistically significant difference or relationship” you should ask the following questions to gain a better understanding of the statistics used to drive decision making.

1. What is the confidence level (alpha level)? What was the sample size?

To evaluate statistical errors, we look to a “magic number” called the alpha (α) level, or significance level; the corresponding confidence level is 1 minus alpha. Typically, an alpha level of .05 is used, which corresponds to a 95 percent confidence level: if there is no true difference, there is only a 5 percent chance of obtaining a result this extreme merely by chance. You can change the alpha level, however, if you are willing to take more or less risk. For example, huge sample sizes increase the likelihood of obtaining statistically significant results, because even very small differences with little practical importance become detectable. Therefore, with a large sample size you might decrease the alpha level (i.e., increase the confidence level) to guard against chasing differences that do not matter.

Ignoring large sample sizes and maintaining a high alpha level is a common mistake that sends investigation teams off and running in “fire drill fashion” to determine root causes of problems that do not really exist (reflected in the Type I Error shown in Figure 1). In Figure 1, where the manufacturer incorrectly concluded a difference in yield between sites, a higher confidence level would lower the chance of finding group differences or relationships. Conversely, small sample sizes can result in overlooking significant differences or relationships that actually exist (reflected in the Type II Error in Figure 1). This occurs because there are not enough observations for the statistical tests to conclude that there are group differences or relationships.

To summarize, the bigger your sample size, the more likely you are to find statistically significant results, and the smaller your sample size, the less likely you are to find statistically significant findings. As a quick rule of thumb, a sample size should be somewhere between 30 and 500 observations. In manufacturing you often can’t change sample size; however, you can change your alpha level and interpret your results in light of the sample size that you have. In the statistical world, interpreting results in light of the alpha level, the sample size and the size of the difference you want to detect falls under the topic of statistical power, the probability that a test detects a difference that truly exists.
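To make the sample-size effect concrete, the sketch below holds an observed yield difference fixed and varies only the number of batches per site. The numbers (a 0.5-point difference, a standard deviation of 5) and the function names are hypothetical, and the calculation assumes a two-sample z-test with a known, equal standard deviation at both sites, which is a simplification.

```python
from statistics import NormalDist

def p_value_for_difference(difference, sd, n_per_site):
    """Two-sided p-value for an observed mean difference between two equally sized sites."""
    se = sd * (2 / n_per_site) ** 0.5          # standard error of the difference
    z = abs(difference) / se
    return 2 * (1 - NormalDist().cdf(z))

def approximate_power(difference, sd, n_per_site, alpha=0.05):
    """Approximate chance of detecting a true difference of the given size."""
    se = sd * (2 / n_per_site) ** 0.5
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    shift = abs(difference) / se
    return (1 - NormalDist().cdf(z_crit - shift)) + NormalDist().cdf(-z_crit - shift)

# Same 0.5-point yield difference, same spread, different sample sizes.
for n in (10, 30, 500, 5000):
    p = p_value_for_difference(0.5, 5.0, n)
    power = approximate_power(0.5, 5.0, n)
    print(f"n per site = {n:>4}: p-value = {p:.4f}, power = {power:.3f}")
```

With these assumed values, the same half-point difference is far from significant at 30 batches per site and highly significant at 5,000, which is why the alpha level and the practical size of the difference should be read together with the sample size.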

2. What sampling method was used?

Sampling techniques are a critical component of manufacturing analytics. There are two general categories of sampling methods: (1) random sampling (representative sampling) and (2) non-random sampling (purposeful sampling). Which sampling technique to use depends on what you are examining and how you would like to generalize your statistical inferences. As a good consumer of statistics, you should inquire about the sampling method: was it random or non-random? If random sampling was used, how were the samples selected at random? Watch out for answers like “the operator randomly selected them,” because this may or may not be a “truly random” sample. If non-random sampling was used, then ask about the rationale for how the sample was collected. For example, was it collected from the end of the process because that was the focus of an investigation or because that is the only data you had access to? Also, does the non-random sampling method fit the purpose of the analysis?

Regardless of what type of sampling method is applied, the sample used dictates the frame for the interpretation of the statistical analysis. If data was gathered in 2012, then the statistical interpretation should be restricted to only 2012. If data was only gathered from a single site, then the interpretation of the statistical results should be restricted to that site. While sampling methods can become extremely complex, understanding the rationale for the sampling method selection is critical to the proper use of statistics.
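One way to avoid the “operator randomly selected them” problem is to let a seeded random number generator choose the sample from the full list of eligible batches, so the selection is both unbiased and reproducible. The following is a minimal sketch; the batch IDs, the sample size of 30 and the function name `select_batches` are hypothetical.

```python
import random

def select_batches(batch_ids, sample_size, seed):
    """Draw a simple random sample of batch IDs without replacement."""
    rng = random.Random(seed)                  # a fixed seed makes the draw reproducible
    return sorted(rng.sample(batch_ids, sample_size))

# Hypothetical sampling frame: 200 batches produced at one site in one year.
batch_ids = [f"B{number:04d}" for number in range(1, 201)]
audit_sample = select_batches(batch_ids, sample_size=30, seed=2012)

print(audit_sample[:5])
```

Recording the seed and the list the sample was drawn from documents exactly which population the conclusions apply to.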

3. What statistical test did you use?

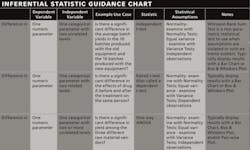

Determining the most appropriate inferential statistical test typically depends on three elements related to what you are asking the data to explain or predict. Before diving into the three elements, however, it is important to grasp the concepts of independent and dependent variables. An independent variable is one that affects an outcome (i.e., changes the dependent variable). A dependent variable is an outcome variable whose value depends on other variables. For example, if you believe large amounts of chocolate pudding increase happiness, the amount of chocolate pudding is your independent variable and happiness is your dependent variable. After identifying your independent and dependent variables, you can go through these three steps to determine the most appropriate statistical test:

• Are you interested in looking at a difference in parameters or a relationship among parameters?

• What type of parameter is each dependent variable? (numeric or categorical)

• What type of parameter is each independent variable? (numeric or categorical)
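The three questions above can be read as a lookup. The sketch below encodes a commonly taught textbook mapping from the answers to a candidate test; it is an assumption for illustration, not the authors' decision table, it covers only a few common cases, and the function name `suggest_test` is hypothetical.

```python
def suggest_test(question, dependent, independent):
    """Suggest a candidate test.

    question: "difference" or "relationship"
    dependent, independent: "numeric" or "categorical"
    """
    candidates = {
        ("difference", "numeric", "categorical"):
            "t-test (two groups) or ANOVA (three or more groups)",
        ("relationship", "numeric", "numeric"):
            "correlation or linear regression",
        ("relationship", "categorical", "categorical"):
            "chi-square test of independence",
        ("relationship", "categorical", "numeric"):
            "logistic regression",
    }
    # Anything outside this simplified table is a cue to ask an expert.
    return candidates.get((question, dependent, independent), "consult a statistician")

# Example: does yield (numeric) differ between sites (categorical)?
print(suggest_test("difference", "numeric", "categorical"))
```

A lookup like this is only a starting point; study design, sample size and assumption checks can all change which test is appropriate.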

4. Did you check the statistical assumptions?

Statistics rely on assumptions, and making incorrect assumptions about your data can lead to errors in conclusions due to incorrect interpretation of the statistics. Linearity, independent observations, normality and equal variance (LINE) are assumptions of commonly applied statistical tests (parametric statistics), and ignoring these assumptions can lead to misinterpretation of results.

When comparing yield across three manufacturing sites with an ANOVA test, for example, we assume yield is normally distributed, the observations are independent, and there is similar variance in yield between all of the sites. If any of these ANOVA assumptions is violated, then results may be incorrect.
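A quick screening check for the equal-variance assumption is to compare the largest and smallest sample variances across sites. The sketch below uses made-up yield values, and the cutoff of 4 is a common rule of thumb assumed here for illustration rather than a formal test; a statistician would typically follow up with a formal procedure such as Levene's test.

```python
from statistics import variance

def variance_ratio(groups):
    """Ratio of the largest to the smallest sample variance across groups."""
    variances = [variance(values) for values in groups.values()]
    return max(variances) / min(variances)

# Made-up yield data (percent) for three hypothetical sites.
yields = {
    "Site A": [90.1, 91.4, 89.7, 90.8, 92.0, 88.9],
    "Site B": [89.5, 90.9, 91.2, 88.7, 90.3, 91.8],
    "Site C": [84.0, 96.5, 88.2, 93.9, 80.7, 97.3],
}

ratio = variance_ratio(yields)
RULE_OF_THUMB_LIMIT = 4.0   # assumed screening threshold, not a formal test
if ratio > RULE_OF_THUMB_LIMIT:
    print(f"Variance ratio {ratio:.1f}: equal-variance assumption looks doubtful")
else:
    print(f"Variance ratio {ratio:.1f}: no obvious problem with equal variance")
```

In this made-up data set, Site C varies far more than the other two sites, so a standard ANOVA result would deserve a closer look before anyone acts on it.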

Statistical errors will continue to run rampant in life sciences manufacturing as today’s point-and-click, data-rich environments make access to statistics much easier. Having a sound working knowledge of statistical best practices and understanding commonly misapplied areas of statistics (sampling, process capability, statistical process control and ANOVA) will better inform your organization’s decisions. Proper interpretation by a trained statistician is always most valuable, but as a minimum standard, a smart data scientist offers a check-and-balance by asking important questions that help avoid inaccurate conclusions.

About the Authors

Kate DeRoche Lusczakoski, Ph.D., is the Manager of Business Consulting with Aegis Analytical Corp. in Lafayette, Colo., with a doctorate degree in applied statistics/research methods.

Aaron Spence, M.A., is an Analytics Specialist with Aegis Analytical Corp. in Lafayette, Colo., with a master’s degree in health psychology.